Introduction

Companies spend an average of $2,020 per rep on sales enablement annually. Yet 84% of reps missed quota last year, and 67% don't expect to hit it this year. The disconnect isn't a training budget problem; it's a diagnosis problem.

What separates high-performing sales teams from the rest is how precisely they identify skill gaps before deploying training. The competencies you measure, how you structure the evaluation, and what you do with the results afterward all determine whether your assessment drives change or collects dust. Without that diagnosis, training spend is largely guesswork.

TL;DR

- Sales skills assessments measure whether reps can perform behaviors that influence buyer decisions, not just what they know

- Effective assessments evaluate observable behaviors in realistic scenarios, anchored to a competency framework that matches your sales motion

- Running assessments at regular intervals tracks skill development over time, not just hiring readiness

- The biggest failures come from measuring the wrong competencies, using subjective scoring, and not connecting results to a coaching action plan

- Linked to win rates and deal outcomes, assessment data reveals exactly which skill gaps are costing revenue

What Sales Skills and Competencies Should You Actually Assess

Skills vs. Competencies: Understanding the Difference

A sales skill is a specific, teachable behavior, such as asking open-ended questions, handling objections, or articulating ROI. A sales competency is how a rep applies multiple skills together with judgment in real buyer situations. This distinction determines what your assessment should measure.

Competencies predict outcomes; individual skills alone do not. Assessing whether a rep can recite BANT criteria tests knowledge. Assessing whether they can qualify a deal under time pressure while managing stakeholder politics tests competency.

Core Competency Categories for B2B Sales Roles

The competencies worth assessing must tie directly to buyer decision-making moments. According to CEB/Gartner research, 53% of customer loyalty comes from the quality of the sales experience, not company reputation or product features. Your assessment should focus on:

Discovery and questioning:

- Ability to uncover unstated needs and pain points

- Use of open-ended vs. closed questions

- Depth of business context gathered before proposing solutions

Value messaging and storytelling:

- Connecting product capabilities to specific buyer outcomes

- Communicating ROI cases clearly and confidently

- Differentiating from competitors on value, not features

Objection handling and negotiation:

- Responding to price pressure without discounting

- Addressing concerns without becoming defensive

- Navigating multi-stakeholder objections

Pipeline discipline:

- CRM hygiene and deal qualification accuracy

- Forecasting realism

- Stage progression judgment

Relationship and account development:

- Stakeholder mapping and engagement

- Cross-sell and upsell identification

- Long-term account planning

New Business vs. Account Growth: Scope Matters

Competencies for new business acquisition differ from those needed for expansion and retention:

New business roles need:

- Prospecting persistence and messaging

- Discovery in unfamiliar buying environments

- Competitive differentiation under pressure

Account growth roles need:

- Stakeholder alignment across buying groups

- Renewal conversation skills

- Strategic account planning and value realization tracking

Assessments should be scoped to the rep's actual role, not a generic sales skillset. An SDR assessment focused on complex negotiation wastes time; an enterprise AE assessment without stakeholder navigation leaves critical gaps.

Validate With Your Top Performers

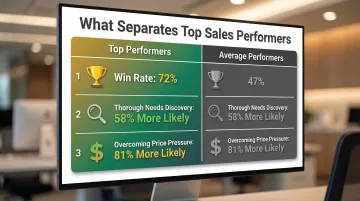

Identify the 3-5 behaviors that consistently separate your highest win-rate reps from average performers. According to RAIN Group research, top performers outperform average reps on three measures:

- 72% vs. 47% average win rate on proposed sales

- 58% more likely to lead thorough needs discoveries

- 81% more likely to overcome price pressure while maintaining margins

Build your assessment around whichever of these behaviors your own top performers share; that's where the signal is.

How to Create a Sales Skills Assessment for Reps

Step 1: Define the Purpose and Scope

Decide whether this assessment is for hiring/onboarding, periodic performance review, or pre-kickoff gap analysis. The purpose determines format, depth, and cadence.

Scope by role:

- Sales Development Reps (SDRs): Focus on outbound prospecting, qualifying conversations, and objection handling

- Account Executives (AEs): Emphasize complex deal navigation, multi-stakeholder alignment, and value articulation

- Channel/Partner Sales Reps: Include partner enablement dynamics, co-selling skills, and distributor relationship management

Getting this right upfront keeps the assessment focused and makes the results usable.

Step 2: Map Competencies to Observable Behaviors

For each competency you've chosen to assess, define 2-3 specific, observable behaviors that a rep would demonstrate when performing that skill well. These become the foundation for your scoring rubric.

Avoid vague criteria like:

- "Strong communicator"

- "Good listener"

- "Effective closer"

Instead, define observable indicators such as:

- Asks clarifying questions before proposing a solution

- Connects product capability to a specific buyer pain point without prompting

- Summarizes next steps and confirms decision-maker alignment before ending the call

The more specific the indicator, the less room there is for scorer disagreement, which matters at scale.

Step 3: Select the Right Assessment Format

Choose the format that matches the competency you're evaluating:

Knowledge-based quizzes:

- Best for product knowledge, process adherence, methodology frameworks

- Fast to deploy and score

- Limited predictive validity for actual sales performance

Role-play or simulated sales calls:

- Highest predictive validity for behavioral skills (correlation of 0.45 to 0.63 with job performance)

- Tests applied competency in controlled scenarios

- Requires standardized scenarios and trained evaluators

Written response exercises:

- Useful for strategic thinking, account planning, and communication clarity

- Less effective for measuring real-time judgment or conversational agility

AI-powered call scoring:

- Continuous, real-time assessment layer across live conversations

- Flags skill gaps deal-by-deal rather than waiting for scheduled reviews

- Complements periodic structured assessments by providing ongoing behavioral tracking

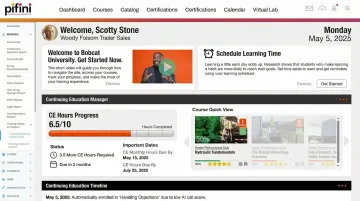

Platforms like Pifini bring this to life with AI call scoring that evaluates discovery quality, objection handling, product positioning, and closing strength on every call. Gaps are flagged automatically, and reps are routed into targeted training without waiting for a scheduled review cycle.

Step 4: Build Standardized Scenarios and Scoring Rubrics

Every rep must face the same scenario, persona, and decision context. Without that consistency, results can't be compared, and coaching decisions drift toward gut feel rather than evidence.

Create scenarios that:

- Reflect real buyer situations your reps encounter

- Include realistic objections, constraints, and decision dynamics

- Specify the persona, their goals, and their context

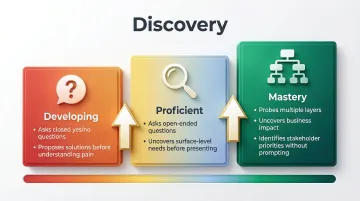

Build scoring rubrics that distinguish clearly between levels:

| Competency Level | Indicator Example (Discovery) |
|---|---|
| Developing | Asks closed yes/no questions; proposes solutions before understanding pain |
| Proficient | Asks open-ended questions; uncovers surface-level needs before presenting |
| Mastery | Probes multiple layers; uncovers business impact and stakeholder priorities without prompting |

Include example indicators for each level so managers score consistently regardless of personal bias.
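One way to keep scoring consistent is to store the rubric as data rather than prose, so every evaluator ticks off the same indicators. The sketch below is illustrative only: the `RubricLevel` class, the numeric 1-3 scores, and the `score_observation` helper are assumptions, while the level names and indicators come from the discovery rubric above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RubricLevel:
    score: int             # numeric value for the level (assumed 1-3 scale)
    name: str              # level name from the rubric table
    indicators: frozenset  # behaviors an evaluator ticks off while observing


# Level names and indicators mirror the discovery rubric; scores are an assumption.
DISCOVERY_RUBRIC = [
    RubricLevel(1, "Developing", frozenset({
        "asks closed yes/no questions",
        "proposes solutions before understanding pain",
    })),
    RubricLevel(2, "Proficient", frozenset({
        "asks open-ended questions",
        "uncovers surface-level needs before presenting",
    })),
    RubricLevel(3, "Mastery", frozenset({
        "probes multiple layers",
        "uncovers business impact and stakeholder priorities without prompting",
    })),
]


def score_observation(observed, rubric=DISCOVERY_RUBRIC):
    """Return the highest level whose indicators were all observed.

    Falls back to the lowest level when no level is fully matched.
    """
    observed = set(observed)
    matched = [level for level in rubric if level.indicators <= observed]
    if matched:
        return max(matched, key=lambda level: level.score)
    return min(rubric, key=lambda level: level.score)


result = score_observation({
    "asks open-ended questions",
    "uncovers surface-level needs before presenting",
})
print(result.name, result.score)
```

Requiring every indicator at a level is a deliberately strict choice; a team could just as reasonably award partial credit.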

Step 5: Deploy the Assessment and Collect Results

Rollout logistics:

- Communicate the purpose in advance: frame it as a growth tool, not a performance review trap

- Set realistic time limits and ensure controlled conditions: same setup, same instructions, no coaching mid-assessment

- Use the same evaluators or calibrate multiple scorers before deployment

Aggregate results into a shared format that shows:

- Individual scores by competency

- Team-level averages

- Variance between top and bottom performers

This comparison layer turns raw scores into actionable insight. If your top quartile scores 4.2/5 on discovery but the bottom quartile scores 1.8, you've identified a high-impact coaching opportunity.
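The aggregation described above can be sketched in a few lines of Python. The rep names, scores, and the `team_averages` and `quartile_gap` helpers are hypothetical; the data is chosen so that discovery reproduces the 4.2 versus 1.8 example.

```python
from statistics import mean

# Hypothetical 1-5 scores: rep -> competency -> score.
scores = {
    "rep_1": {"discovery": 1.6, "objection_handling": 3.0},
    "rep_2": {"discovery": 2.0, "objection_handling": 2.5},
    "rep_3": {"discovery": 2.5, "objection_handling": 3.5},
    "rep_4": {"discovery": 3.0, "objection_handling": 3.0},
    "rep_5": {"discovery": 3.5, "objection_handling": 4.0},
    "rep_6": {"discovery": 3.8, "objection_handling": 3.5},
    "rep_7": {"discovery": 4.0, "objection_handling": 4.0},
    "rep_8": {"discovery": 4.4, "objection_handling": 3.5},
}


def team_averages(scores):
    """Average score per competency across all reps who were scored on it."""
    competencies = sorted({c for rep in scores.values() for c in rep})
    return {
        c: round(mean(rep[c] for rep in scores.values() if c in rep), 2)
        for c in competencies
    }


def quartile_gap(scores, competency):
    """Return (top-quartile mean, bottom-quartile mean, gap) for one competency."""
    values = sorted(rep[competency] for rep in scores.values() if competency in rep)
    k = max(1, len(values) // 4)  # quartile size, at least one rep
    top, bottom = mean(values[-k:]), mean(values[:k])
    return round(top, 2), round(bottom, 2), round(top - bottom, 2)


print(team_averages(scores))
for competency in ("discovery", "objection_handling"):
    print(competency, quartile_gap(scores, competency))
```

Sorting competencies by the gap value gives a rough priority list for coaching, though with small teams a single outlier can dominate a quartile.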

Key Variables That Affect Assessment Quality

Competency Relevance

Assessments built on generic sales skills lists produce noise, not signal. Regularly validate your framework against actual deal outcomes and buyer feedback. If buyers cite "clear ROI articulation" as most influential in their purchasing decision, but your assessment doesn't measure it, you're tracking the wrong variables.

Scoring Consistency

The more subjective your rubric, the less reliable your data. Research shows that idiosyncratic rater effects account for over 50% of rating variance, meaning more than half of the variation in manager scores reflects the rater, not the rep's performance.

Build clear behavioral anchors for each scoring level. If multiple managers are scoring, run a calibration session before deployment to align their judgment.
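A calibration session can be checked with a simple spread test: have every manager score the same recorded role-play, then flag competencies where their scores diverge. This is a minimal sketch; the manager names, the scores, and the one-point tolerance are assumptions, not a recommended standard.

```python
# Hypothetical calibration data: three managers score the same recorded
# role-play on a 1-5 scale.
calibration_scores = {
    "discovery": {"mgr_1": 4, "mgr_2": 4, "mgr_3": 3},
    "objection_handling": {"mgr_1": 2, "mgr_2": 5, "mgr_3": 3},
    "value_messaging": {"mgr_1": 3, "mgr_2": 3, "mgr_3": 3},
}


def calibration_gaps(scores_by_competency, max_spread=1):
    """Return competencies where rater scores differ by more than max_spread points."""
    gaps = {}
    for competency, by_rater in scores_by_competency.items():
        spread = max(by_rater.values()) - min(by_rater.values())
        if spread > max_spread:
            gaps[competency] = spread
    return gaps


print(calibration_gaps(calibration_scores))
```

Any competency that shows up here likely needs sharper behavioral anchors before the assessment goes live.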

Assessment Frequency

A single baseline assessment tells you where reps started; repeated assessments on a quarterly or semi-annual cadence tell you whether training and coaching are actually changing behavior.

The forgetting curve hits fast. Without reinforcement:

- 70% of training information is forgotten within a week

- 87% is gone within a month

- Half of initial skill gains disappear within 6.5 months without practice

Regular reassessment catches this decay before it impacts revenue.

Common Mistakes When Building Sales Skills Assessments

Assessing Confidence Instead of Competence

Self-assessments and manager ratings are the most common pitfall. Both are heavily biased toward perception rather than observable behavior. Research consistently shows an average correlation of only 0.29 between self-assessments and external performance standards, and lower performers are the most likely to overestimate their abilities.

If your method relies on "how do you feel about your prospecting skills," the data is unreliable before it's collected.

Testing Knowledge Without Testing Application

Quizzing reps on methodology frameworks or product features tells you what they've memorized, not whether they can use it in a live sales conversation. Knowledge tests should supplement behavioral assessments, not substitute for them.

74% of organizations use performance assessments because they more effectively measure learning outcomes than knowledge tests alone.

Skipping the Scoring Rubric

Deploying scenarios without defined, calibrated scoring criteria means every manager scores differently. The result is inconsistent data that cannot be used to make fair or accurate coaching decisions at scale.

A basic rubric, for example, might define a "3 out of 5" on objection handling as: acknowledges the concern, asks a clarifying question, and pivots to value without discounting. That level of specificity is what makes assessment data actionable.

What to Do With Sales Skills Assessment Results

Connect Competency Scores to Deal Outcomes

Once assessments are complete, the data becomes useful only when it's tied to revenue outcomes. Cross-reference each rep's scores with their win rate, average deal size, and pipeline velocity from your CRM. This surfaces which skill gaps are most directly tied to lost revenue and tells you where coaching will have the highest ROI.
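As a rough illustration of that cross-reference, the sketch below computes a Pearson correlation between each competency score and win rate, then ranks competencies by the strength of the link. The rep records, field names, and `rank_by_win_rate_link` helper are hypothetical, and a real analysis would need far more than four reps. Correlation here is a prioritization signal, not proof that a skill gap causes lost deals.

```python
from math import sqrt

# Hypothetical export: one record per rep, assessment scores joined with CRM win rate.
reps = [
    {"discovery": 2, "crm_hygiene": 4, "win_rate": 0.15},
    {"discovery": 3, "crm_hygiene": 3, "win_rate": 0.22},
    {"discovery": 4, "crm_hygiene": 5, "win_rate": 0.30},
    {"discovery": 5, "crm_hygiene": 3, "win_rate": 0.41},
]


def pearson(xs, ys):
    """Pearson correlation coefficient; returns 0.0 if either series is constant."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        return 0.0
    return cov / (spread_x * spread_y)


def rank_by_win_rate_link(reps, competencies):
    """Sort competencies by their correlation with win rate, strongest first."""
    win_rates = [rep["win_rate"] for rep in reps]
    links = {c: pearson([rep[c] for rep in reps], win_rates) for c in competencies}
    return sorted(links.items(), key=lambda item: item[1], reverse=True)


ranked = rank_by_win_rate_link(reps, ["discovery", "crm_hygiene"])
for competency, r in ranked:
    print(f"{competency}: r = {r:.2f}")
```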

Organizations that align coaching to competency gaps see a 32.1% improvement in win rates and a 27.9% improvement in quota attainment compared to random coaching approaches.

Group Reps Into Coaching Cohorts by Shared Skill Gap

Use assessment data to cluster reps who share the same competency gaps, then assign targeted training paths and relevant coaches. This prevents generic enablement sessions and ensures learning is matched to actual need.

Platforms that combine LMS with AI call scoring, like Pifini, can automate this by:

- Auto-enrolling reps into prescriptive training tracks based on assessment results

- Continuously flagging skill gaps between formal reviews using AI call scoring

- Tracking whether reps improve performance metrics after completing training
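Independent of any particular platform, the cohort grouping itself is simple to sketch. The example below assigns each rep to a cohort based on their weakest competency, provided it falls below a threshold. The rep names, scores, the 3.0 cutoff, and the `build_cohorts` helper are all assumptions for illustration.

```python
from collections import defaultdict

# Hypothetical 1-5 assessment scores per rep.
assessment = {
    "rep_a": {"discovery": 2.0, "value_messaging": 3.5, "objection_handling": 4.0},
    "rep_b": {"discovery": 2.5, "value_messaging": 3.0, "objection_handling": 3.5},
    "rep_c": {"discovery": 4.0, "value_messaging": 3.5, "objection_handling": 2.0},
    "rep_d": {"discovery": 4.0, "value_messaging": 4.5, "objection_handling": 3.5},
}


def build_cohorts(assessment, threshold=3.0):
    """Group reps by their lowest-scoring competency when it is below threshold."""
    cohorts = defaultdict(list)
    for rep, by_competency in assessment.items():
        weakest, value = min(by_competency.items(), key=lambda item: item[1])
        if value < threshold:
            cohorts[weakest].append(rep)
    return dict(cohorts)


print(build_cohorts(assessment))
```

Reps with no score below the threshold are left out of every cohort, which keeps them out of training they don't need.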

Reassess on a Regular Cadence and Close the Feedback Loop

Build a recurring review cycle. Track improvement scores over time and pair periodic assessments with real-time call data to catch regression or confirm growth between formal reviews.

Only 35% of companies measure whether skills are applied on the job after training. Combining formal assessment with continuous verification closes that gap and gives you the data needed to optimize enablement spend and business performance.

Frequently Asked Questions

How do you assess sales skills?

Effective sales skill assessment requires observing reps in realistic, standardized scenarios (role-plays, simulated calls, or AI-scored live calls) and rating them against a behavioral rubric tied to specific sales competencies. Avoid relying solely on self-ratings or knowledge quizzes, which have low predictive validity for actual performance.

What are the 7 steps of selling skills?

Most B2B sales processes cover prospecting, qualifying, needs discovery, solution presentation, handling objections, closing, and follow-up/account development. A strong assessment should evaluate rep capability across the stages most critical to their specific role, not all seven equally.

What are the 5 assessment tools?

The main assessment tool categories are knowledge-based tests, role-play or simulation assessments, AI-powered call scoring and analysis, manager observation rubrics, and buyer/deal feedback-based assessments. The most accurate picture combines behavioral and outcome-based methods rather than relying on any single tool.

What's the difference between a sales skill and a sales competency?

A skill is a specific, teachable behavior, such as asking open-ended questions or articulating ROI. A competency describes how well a rep applies multiple skills together with sound judgment in real sales situations. Competencies predict outcomes; individual skills alone do not.

How often should you run a sales skills assessment?

Run a formal assessment at onboarding to establish a baseline, then on a quarterly or semi-annual cadence for the broader team. Supplement these with ongoing AI-powered call scoring between formal reviews to catch skill gaps in real time and identify regressions early.

How do you act on sales skills assessment results?

Results should be cross-referenced with deal performance data, used to build competency-based coaching cohorts, and translated into targeted training paths. Assessments that don't connect to a coaching action plan produce data but not improvement.