Introduction

Most call analysis platforms promise AI-driven insights and deliver dashboards instead. Managers end up reviewing reports rather than coaching reps, feedback stays inconsistent across teams, and no one can clearly explain what separates top performers from everyone else.

The software isn't the problem; the evaluation process is. Too many teams buy on features rather than outcomes.

The stakes of this decision are higher than most leaders realize. Sales managers currently review less than 1% of all sales calls, creating massive blind spots in performance visibility. Meanwhile, companies that provide optimal coaching achieve 16.7% higher annual revenue growth than those that don't. The right platform closes that gap, but only when you're asking the right questions during evaluation.

This article presents 7 specific, high-signal questions that cut through vendor noise and help you identify a platform that truly connects call analysis to performance improvement and revenue outcomes.

TL;DR

- Call analysis platforms range from basic transcription to full conversation intelligence with AI scoring and auto-triggered coaching

- Focus evaluation on automation coverage, scoring objectivity, CRM integration, revenue correlation, and post-insight action workflows

- Most leaders overlook a critical question: Can the platform support partner and channel sales teams, not just direct reps?

- Ask vendors how they define implementation success, not just what features they offer

- Platforms that convert call insights directly into rep coaching actions drive measurable, repeatable performance gains

What Is Call Analysis Software?

Call analysis software records, transcribes, and evaluates sales conversations using AI to identify patterns, score rep behavior, and surface coaching opportunities. It differs fundamentally from basic call recording, which simply stores audio without interpretation or actionable insights.

The category spans a wide range:

- Simple transcription tools convert speech to searchable text, nothing more

- Full conversation intelligence systems score every call against defined frameworks, flag skill gaps, connect behavior to pipeline outcomes, and trigger automated coaching workflows

Where a vendor sits on that spectrum determines which of the seven questions below actually matter for your evaluation.

Why Call Analysis Has Become a Priority for Sales Leaders

Manual call review doesn't scale. Sales managers can realistically listen to only a fraction of calls and spend just 36 minutes per week coaching each rep, which creates blind spots in performance visibility and coaching consistency. An overwhelming 77% of firms do not provide enough coaching to their salespeople. AI analysis closes that gap by enabling 100% call coverage without adding to manager workload.

That coverage shifts coaching from reactive to systematic. Instead of waiting for deal outcomes to reveal skill gaps, leaders can identify what winning reps do differently and replicate those behaviors in real time. Teams using hybrid AI-human coaching models see a 25% boost in sales performance, which suggests that AI's objectivity and manager judgment together outperform either approach alone.

7 Questions Sales Leaders Should Ask When Evaluating Call Analysis Software

These questions go beyond feature checklists. They reveal whether a platform is built for operational scale, coaching consistency, and revenue impact, or whether it's primarily a data reporting tool that shifts the burden back to managers.

Question 1: Does it automatically analyze every call, or only a sample?

Coverage completeness is foundational. A platform that requires managers to manually select calls for review reintroduces the same sampling bias that manual coaching creates. High performers get disproportionate attention, struggling reps go uncoached, and systemic gaps stay hidden. With less than 1% of calls currently reviewed, sampling perpetuates blind spots rather than eliminating them.

What to probe in vendor conversations:

- Does the platform analyze 100% of relevant calls automatically, without manual selection?

- Is call classification (discovery calls vs. demos vs. follow-ups) automated or manual?

- How does the system handle high call volumes without degrading analysis speed or accuracy?

- Are there call volume limits or throttling mechanisms that could create gaps in coverage?

Platforms offering complete, automated coverage eliminate bias and surface patterns that random sampling misses entirely.

Question 2: How does the platform define and score "what good looks like"?

Scoring objectivity separates consistent coaching standards from manager-to-manager variability. Inter-rater reliability is a well-established concern because human evaluators are inherently subjective, so two managers can score the same call differently. If scorecards are generic, non-customizable, or entirely manager-defined, the platform cannot enforce a single performance standard across your team.

What to probe:

- Are scorecards built from analysis of your top-performing calls, or are they pre-loaded templates?

- How does the system ensure every rep is measured against identical criteria regardless of who reviews their calls?

- Does the scoring model evolve as win/loss data accumulates?

- Can you customize scoring frameworks to reflect your specific sales methodology?

AI scoring applies the same criteria to every call, which removes manager-to-manager variability, but only if the underlying framework reflects your definition of excellence. Demand proof that scorecards can be tailored to your sales process.
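To make "identical criteria for every rep" concrete, here is a minimal sketch of a weighted scorecard. The criterion names, weights, and the 0-5 rating scale are illustrative assumptions, not any vendor's actual framework; the point is only that one rubric, applied the same way to every call, produces comparable scores.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: int  # relative importance; hypothetical values


# Hypothetical rubric: replace with criteria from your own sales methodology.
RUBRIC = [
    Criterion("discovery_depth", 40),
    Criterion("objection_handling", 35),
    Criterion("next_steps_confirmed", 25),
]

MAX_RATING = 5  # assumed rating scale: 0 (absent) to 5 (excellent)


def score_call(ratings: dict, rubric=RUBRIC) -> float:
    """Return a 0-100 score from per-criterion ratings on a 0-5 scale."""
    missing = [c.name for c in rubric if c.name not in ratings]
    if missing:
        # Refuse to score partially rated calls so scores stay comparable.
        raise ValueError(f"missing ratings for: {missing}")
    weighted = sum(c.weight * ratings[c.name] for c in rubric)
    max_possible = MAX_RATING * sum(c.weight for c in rubric)
    return round(100 * weighted / max_possible, 1)


print(score_call({
    "discovery_depth": 4,
    "objection_handling": 3,
    "next_steps_confirmed": 5,
}))  # prints 78.0
```

Because the rubric lives in one place, changing a weight changes it for every rep at once, which is the property to ask vendors to demonstrate.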

Question 3: Can it connect call-level behaviors to actual revenue outcomes?

Many platforms excel at call-level scoring but cannot answer the strategic questions that matter most to sales leadership: Which specific behaviors correlate with closed-won deals? Which competencies shorten sales cycles or increase average deal size? Without this revenue correlation, analysis remains tactical rather than strategic.

Research points to specific behaviors that drive results. Top-performing reps maintain a consistent 43:57 talk-to-listen ratio across both won and lost deals, and multi-threading deals over $50K boosts win rates by 130%. The platform should surface these patterns in your own data, not just report generic metrics.

What to probe:

- Request a live demonstration of aggregate dashboards showing behavioral trend data tied to deal outcomes

- Ask whether the platform can identify which competencies (discovery depth, objection handling, multi-threading) have the strongest correlation with pipeline movement

- Request real customer examples showing how behavioral insights translated to revenue improvement
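As a rough illustration of what "connecting behaviors to outcomes" means at the data level, the sketch below compares win rates for deals where a behavior was observed against deals where it was not. The deal records and behavior labels are made up for the example, and a real platform would need far more data and controls before drawing conclusions, since a simple split like this shows correlation, not causation.

```python
def win_rate(outcomes):
    """Share of won deals in a list of booleans, or None if the list is empty."""
    return sum(outcomes) / len(outcomes) if outcomes else None


def behavior_split(deals, behavior):
    """Return (win rate with the behavior, win rate without it)."""
    with_b = [d["won"] for d in deals if behavior in d["behaviors"]]
    without_b = [d["won"] for d in deals if behavior not in d["behaviors"]]
    return win_rate(with_b), win_rate(without_b)


# Hypothetical closed deals with the behaviors detected on their calls.
DEALS = [
    {"behaviors": {"multi_threading", "deep_discovery"}, "won": True},
    {"behaviors": {"multi_threading"}, "won": True},
    {"behaviors": {"deep_discovery"}, "won": False},
    {"behaviors": set(), "won": False},
    {"behaviors": {"multi_threading"}, "won": False},
    {"behaviors": {"deep_discovery"}, "won": True},
]

with_rate, without_rate = behavior_split(DEALS, "multi_threading")
print(f"with: {with_rate:.0%}, without: {without_rate:.0%}")
```

In a vendor demo, ask to see the equivalent view built from your own closed-won and closed-lost deals rather than from benchmark data.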

Question 4: How seamlessly does it integrate with your CRM and existing sales stack?

Call analysis without CRM context produces weaker, less actionable insights. A platform that automatically pulls deal stage, opportunity owner, account type, and pipeline value from your CRM can weight its analysis by deal context, making recommendations more relevant and reducing manual data work.

The integration payoff extends beyond insight quality. B2B sellers spend 70% of their time on non-selling tasks, with 9% of the average workweek consumed by manually entering customer and sales information. Deep CRM integration reclaims that time.

What to probe:

- Which CRM systems are natively integrated (not just API-available)?

- Is deal and contact data pulled automatically without rep or manager input?

- How does the platform handle data sync latency?

- What happens to call data if a CRM field is missing or incorrectly formatted?

Bi-directional syncing ensures call notes, next steps, and deal updates flow seamlessly, improving data accuracy while eliminating administrative burden.

Question 5: Does the platform turn insights into action, or just surface findings?

The "insight-to-action gap" is the most common failure mode of call analysis software. Platforms produce dashboards that managers review and forget. Without a defined workflow that converts a flagged gap into a coaching conversation, a training module, or a rep-specific action item, insights accumulate without driving behavior change.

The data backs this up: between 60% and 73% of all enterprise data goes unused for analytics, and 60-80% of business intelligence dashboards go unused or underutilized.

What to probe:

- What happens after a call is scored and a gap is identified?

- Does the platform automatically route the rep into a relevant training module?

- Does it notify a manager with a specific coaching prompt or create a development task?

- How quickly does the system respond: within hours, within days, or only after manual review?

Platforms with built-in or natively integrated learning management capabilities close this loop more effectively than those relying on manual follow-up. When AI recommendations are followed within 24 hours, average sales cycles are reduced by 32.6%, which means speed of response matters as much as the insight itself. Pifini.ai's AI call scoring, for instance, automatically enrolls reps into prescriptive training when gaps are detected, cutting the lag between finding a problem and fixing it.
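The kind of workflow described above can be sketched as a simple routing rule: when a skill score falls below a threshold, enroll the rep in a matching training module if one exists, and otherwise prompt the manager. Everything here (the threshold of 60, the module catalog, the action names) is a hypothetical illustration, not a description of how any specific product works.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    kind: str    # "enroll_training" or "notify_manager"
    rep: str
    detail: str


# Hypothetical catalog mapping a skill gap to a training module.
TRAINING_BY_SKILL = {
    "objection_handling": "Objection Handling Fundamentals",
    "discovery_depth": "Discovery Question Workshop",
}


def actions_for_call(rep, skill_scores, threshold=60):
    """Turn low skill scores (0-100) into concrete follow-up actions."""
    actions = []
    for skill, score in sorted(skill_scores.items()):
        if score >= threshold:
            continue  # no gap detected for this skill
        module = TRAINING_BY_SKILL.get(skill)
        if module is not None:
            actions.append(Action("enroll_training", rep, module))
        else:
            # No matching module: fall back to a manager coaching prompt.
            actions.append(
                Action("notify_manager", rep, f"Coach on {skill} (score {score})")
            )
    return actions


for action in actions_for_call(
    "rep-1", {"objection_handling": 45, "discovery_depth": 80, "pricing": 30}
):
    print(action.kind, "->", action.detail)
```

The design question for vendors is which of these steps run automatically after scoring and which still wait on a manager to click something.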

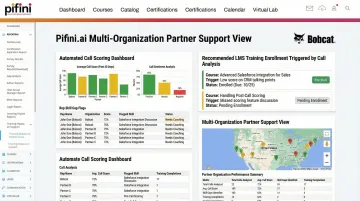

Question 6: Can it support partner and channel sales teams, not just direct reps?

Most call analysis platforms are designed for direct sales organizations where all reps are internal employees on shared systems, and that assumption creates a blind spot for channel-driven businesses. Companies that sell through resellers, distributors, or channel partners face fundamentally different challenges: reps are external, call data is fragmented, and training deployment is more complex.

The stakes are significant. In the manufacturing sector, 35.3% of revenue is sourced through channel partners. Yet partners report that nearly 90% of their top challenges are related to partner enablement.

What to probe:

- Does the platform support multi-organization call analysis?

- Can external partner reps be onboarded, scored, and enrolled in training?

- How does the vendor handle data privacy and separation across partner accounts?

- Are there additional costs or technical limitations when deploying to channel partners?

For organizations with a channel motion, the answer to this question alone can eliminate most vendors from consideration. Platforms built from the ground up for extended enterprise use, like Pifini.ai, handle these requirements natively, rather than bolting on partner support as an afterthought.

Question 7: How does the vendor define a successful implementation?

This question is a reliable signal of vendor intent. Vendors focused on short-term deals define success as user adoption or number of calls analyzed. Vendors built for long-term partnership define success as measurable improvement in rep performance, faster ramp time, or increased win rates. Those metrics require ongoing collaboration, not just software deployment.

What to probe:

- Ask for a specific description of the onboarding process: Who builds the initial scorecards? How long does it take? Who is involved?

- Ask what metrics the vendor tracks to assess whether their customers are achieving value

- Request two or three references from customers at similar company size and sales model who can speak to outcomes achieved, not just software features used

A vendor who answers with outcome metrics (win rate lift, ramp time reduction, rep retention) is structurally different from one who leads with deployment timelines. 67% of enterprise conversation intelligence implementations face significant adoption challenges, often requiring 6 to 12 months to deliver basic functionality. The vendor's answer to this question is your clearest signal of whether they'll be a deployment partner or a support ticket.

How Pifini.ai Answers Every One of These Questions

Pifini.ai is built on a straightforward principle: call analysis shouldn't stop at the insight. It should automatically connect what a rep does on a call to what they learn next. Unlike platforms designed exclusively for direct sales teams, Pifini serves both internal reps and partner ecosystems.

Key differentiators mapped to the 7 questions:

- Evaluates every call automatically and scores it against your defined frameworks, with no manual selection required

- Tailors scorecards to your sales methodology for consistent measurement across all reps

- Flags skill gaps and auto-enrolls reps into targeted training through the built-in Enterprise LMS, closing the insight-to-action gap without manual intervention

- Guides reps during live calls via the AI Call Copilot, handling objections and navigating complex conversations in the moment, not just in post-call review

- Supports multi-organization deployments with native data separation, built for partner and channel ecosystems from the ground up, not retrofitted from a direct-sales tool

$50/User/Year vs. $300–$600 with Competitors

Those feature advantages only matter if you can deploy them at full scale. Pifini.ai's pricing, $50 per user per year, contrasts sharply with enterprise competitors like Seismic, Mindtickle, and Bigtincan, which typically charge $300–$600 per user annually.

That roughly 6x to 12x cost difference lets organizations roll out call analysis across direct and partner teams without the sampling compromises that high per-seat costs force. When budget constraints push teams to analyze only a subset of calls or exclude partner reps entirely, the platform's value drops sharply. Pifini's pricing removes that constraint.
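The per-seat figures quoted above are easy to turn into a budget comparison. The sketch below uses the article's prices ($50 versus $300 to $600 per user per year); the 200-seat team size is an assumption chosen only for illustration.

```python
def annual_cost(seats: int, price_per_seat: float) -> float:
    """Total yearly license cost for a given seat count."""
    return seats * price_per_seat


def price_multiple(baseline: float, low: float, high: float):
    """How many times more expensive the low and high prices are vs. the baseline."""
    return low / baseline, high / baseline


SEATS = 200  # hypothetical team size, direct plus partner reps

print(price_multiple(50, 300, 600))   # prints (6.0, 12.0)
print(annual_cost(SEATS, 50))         # prints 10000
print(annual_cost(SEATS, 300), annual_cost(SEATS, 600))  # prints 60000 120000
```

Rerun the numbers with your own seat count, including partner reps, to see how quickly per-seat pricing decides who gets coverage.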

Conclusion

The best call analysis software doesn't win on feature count. It wins by closing the loop between what happens on a call and what changes in a rep's behavior the next day. These 7 questions give you a framework grounded in outcomes:

- Coverage completeness: does it capture every call across every channel?

- Scoring objectivity: is evaluation consistent and bias-free?

- Revenue correlation: can it tie call behavior to pipeline results?

- CRM integration: does insight flow into the tools reps already use?

- Insight-to-action workflows: does it trigger coaching, not just reports?

- Partner support: does it extend to your channel, not just direct sales?

- Vendor implementation philosophy: will they help you use it, or just sell it?

The gap between platforms that analyze calls and platforms that act on that analysis is already widening. Sales leaders who pressure-test vendors on both dimensions today build coaching infrastructure that compounds, quarter over quarter, rep over rep.

Frequently Asked Questions

What questions should sales leaders ask when evaluating call analysis software?

Focus on seven key areas: whether the platform analyzes 100% of calls automatically (not just samples), how it ensures scoring objectivity across reps, whether it correlates behaviors to revenue outcomes, how deeply it integrates with your CRM, what automated actions follow from insights, whether it supports partner and channel teams, and how the vendor defines implementation success beyond basic deployment.

How do you evaluate a sales call?

Sales call evaluation measures specific behaviors, such as talk-to-listen ratio, discovery question quality, and objection handling, against a defined scoring rubric. AI-driven platforms automate this at scale, replacing manual spot-checking with consistent, objective analysis of every conversation.

What is the difference between call analysis software and conversation intelligence software?

The terms overlap significantly, but conversation intelligence typically implies broader capabilities: sentiment analysis, topic detection, competitor mention tracking, and deal risk signals. Call analysis often refers to narrower transcription and scoring functions without the strategic layer that connects behaviors to revenue outcomes.

Which tools help analyze sales calls and improve sales techniques?

The category includes conversation intelligence platforms such as Gong, Chorus (ZoomInfo), and Salesloft, as well as AI-native enablement platforms such as Pifini.ai. Evaluate not just the analysis capability but whether the tool connects findings to coaching and training workflows, because insights without action don't change rep performance.

How does call analysis software improve sales rep performance?

Performance improvement comes from the actions that follow analysis: consistent feedback, targeted skill development, and replicating top-performer behaviors at scale. Platforms that automatically trigger coaching interventions and training enrollment based on call data produce measurable lift in win rates and ramp time. Reporting alone does not.